The difference between a capable AI agent and a truly effective one comes down to instructions. The model is the same. The context, workflow, and constraints you give it determine whether it produces generic output or work that meets a specific professional standard.

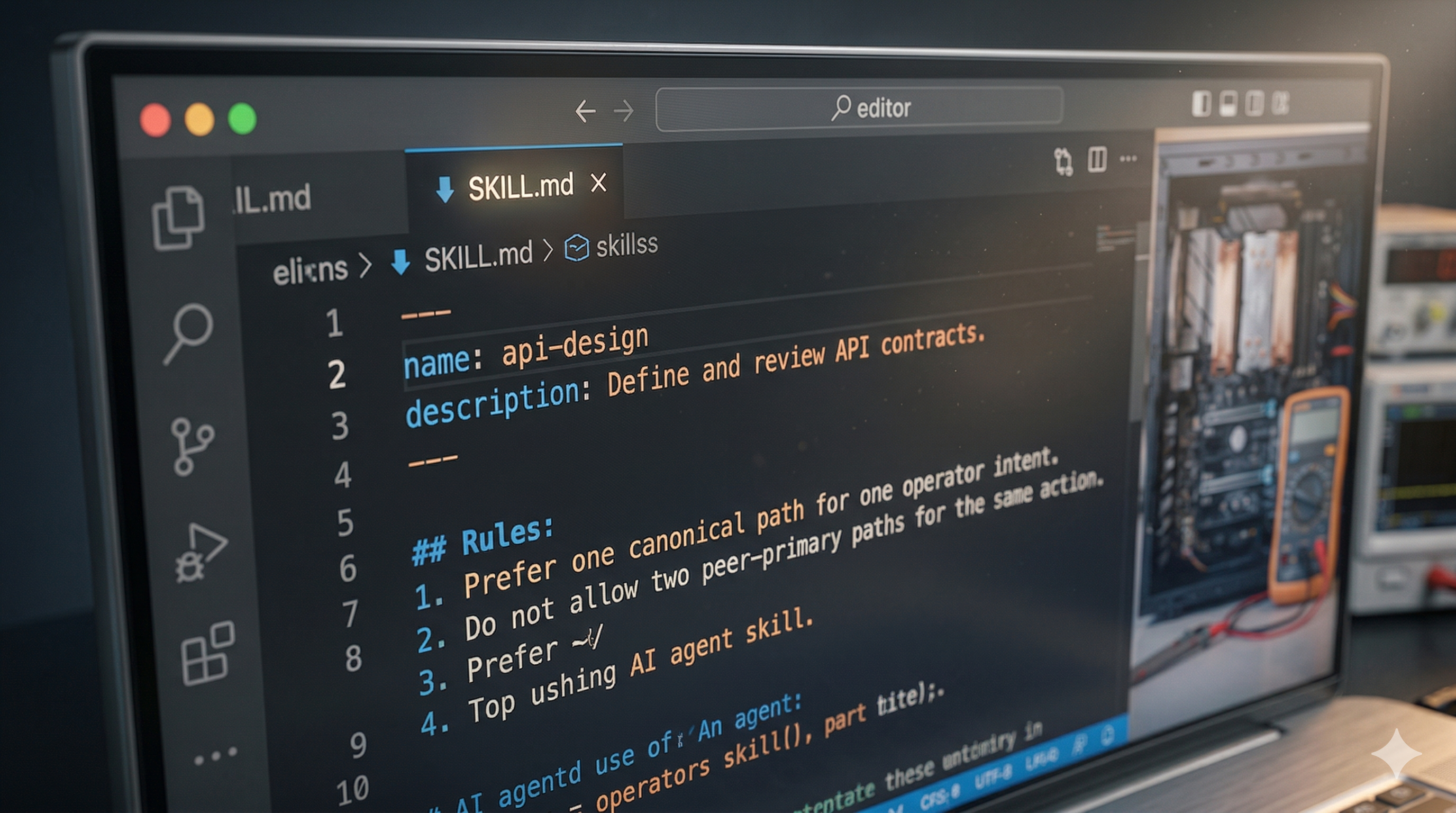

SKILL.md files are the configuration layer for AI agent specialization. They are plaintext Markdown files that tell an agent what domain knowledge to apply, what workflow to follow, and what constraints to respect. When an agent loads a SKILL.md file, it shifts from generalist to specialist for that task.

This article covers what SKILL.md files are, how they work, how to write effective ones, and where to find the growing directory of community-built skills you can use today.

What Is a SKILL.md File?

A SKILL.md file is a plaintext Markdown document that configures how an AI agent behaves for a specific task. It contains domain knowledge the agent needs, the workflow it should follow, and explicit rules about what to avoid. The agent reads the file at runtime and applies those instructions to everything it produces during the session.

The concept is straightforward. You write instructions in plain English, save them as a Markdown file, and the agent loads them when it starts working. There is no compilation step, no API integration, no deployment pipeline. Anyone who can write clear instructions for a capable colleague can write a SKILL.md file.

Each SKILL.md typically contains three core sections. The first is context: what domain the agent is operating in and what background knowledge to apply. The second is instructions: the step-by-step workflow the agent should follow from input to deliverable. The third is constraints: hard rules about what the agent must avoid, regardless of other instructions. Some files add a fourth section with examples of good output, which tends to do more for output consistency than any other single addition.
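To make that layout concrete, here is a minimal Python sketch that renders the sections as Markdown text. The `Skill` class, the `render_skill` function, and the exact heading names are assumptions made for illustration; they are not a format that any particular agent framework requires, and the second instruction step is a placeholder.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical in-memory form of a skill; fields mirror the sections described above."""
    title: str
    context: str
    instructions: list[str]
    constraints: list[str]
    examples: list[str] = field(default_factory=list)  # optional fourth section


def render_skill(skill: Skill) -> str:
    """Render the skill as Markdown text that could be saved as SKILL.md."""
    lines = [f"# {skill.title}", "", "## Context", "", skill.context, ""]
    lines += ["## Instructions", ""]
    lines += [f"{n}. {step}" for n, step in enumerate(skill.instructions, start=1)]
    lines += ["", "## Constraints", ""]
    lines += [f"- {rule}" for rule in skill.constraints]
    if skill.examples:
        lines += ["", "## Examples", ""]
        for example in skill.examples:
            lines += [example, ""]
    return "\n".join(lines).rstrip() + "\n"


seo_skill = Skill(
    title="SEO content writing",
    context=(
        "You are an SEO content writer for a B2B SaaS blog targeting "
        "technical decision-makers who evaluate automation tools."
    ),
    instructions=[
        "Write a six-section outline with H2 headings that each target a "
        "secondary keyword, ordered by search intent from informational to transactional.",
        "Draft each section against the outline.",  # placeholder step for illustration
    ],
    constraints=[
        "Never use em dashes.",
        "Never make claims without a cited source.",
        "Never exceed 1,500 words.",
    ],
)

skill_text = render_skill(seo_skill)
print(skill_text)
```

Writing the file by hand works just as well. The sketch is only meant to show how little structure is involved: a title, a paragraph of context, a numbered workflow, and a bullet list of rules.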

The format has gained traction across Claude Code, GitHub Copilot, and other agent frameworks because it solves a real problem: the same model, given the same prompt, produces wildly different output depending on the context it has. SKILL.md files make that context explicit, repeatable, and shareable.

Why Skills Matter for Agent Quality

A foundation model without task-specific instructions draws on broad training data and makes reasonable but undifferentiated choices about tone, structure, format, and emphasis. The output is functional. It is rarely excellent for a specific use case.

A SKILL.md file for "SEO content writing" changes the equation entirely. It includes keyword placement rules, meta tag formulas, E-E-A-T requirements, content structure templates, and internal linking protocols. The same model, with this skill loaded, produces output that follows a publication's editorial standards and SEO strategy from the first draft.

The effect compounds across a team. When every agent session loads the same skill, output consistency improves across all contributors. New team members produce work that meets existing standards from their first session. Institutional knowledge about how to do a task well lives in the skill file rather than in one person's memory or a document that nobody reads.

This is the practical argument for investing time in writing good skills. A well-written SKILL.md pays for itself within a few uses, and every subsequent use is pure leverage.

Anatomy of a Good SKILL.md

Section 1: Context

The context section tells the agent what domain it is operating in and what background knowledge to apply. This is where you establish the agent's perspective, expertise, and awareness of your specific environment.

A strong context section is specific. "You are a content writer" is too broad. "You are an SEO content writer for a B2B SaaS blog targeting technical decision-makers who evaluate automation tools" gives the agent enough information to make good judgment calls throughout the task. The more specific your context, the fewer corrections you need to make downstream.

Section 2: Instructions

The instructions section defines the workflow. This is the step-by-step process the agent should follow, from receiving a task to delivering output.

Effective instructions read like a process document for a new hire who has deep general knowledge but zero context about your specific workflow and standards. "Write the outline" is a weak instruction. "Write a six-section outline with H2 headings that each target a secondary keyword, ordered by search intent from informational to transactional" gives the agent a clear specification to execute against.

The best instruction sections decompose the task into discrete steps with explicit quality criteria for each one. This prevents the agent from making assumptions about what "good" means at each stage.

Section 3: Constraints

The constraints section defines boundaries. These are the rules the agent must follow regardless of other instructions or context.

Constraints prevent the most common failure modes. "Never use em dashes" prevents a specific stylistic issue. "Never make claims without a cited source" prevents hallucination in research-heavy tasks. "Never exceed 1,500 words" prevents scope creep. Good constraints are specific, testable, and directly tied to failure modes you have actually observed.

At Automation Switch, our writing skill includes constraints like "No definition by negation" and "No em dashes." These came from editing rounds where we noticed patterns in AI-generated output that did not match our voice. Each constraint traces back to a real problem we encountered in production.
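Because good constraints are testable, some of them can be checked mechanically before a human reviews the draft. The following is a minimal sketch covering two of the rules quoted above, the em dash rule and the word limit. The function name `check_constraints` and the whitespace-based word count are assumptions for illustration. A rule such as "Never make claims without a cited source" is harder to automate and is left out.

```python
EM_DASH = "\u2014"  # written as an escape so this file itself contains no em dash


def check_constraints(draft: str, max_words: int = 1500) -> list[str]:
    """Return human-readable violations; an empty list means the draft passed."""
    violations = []

    # Constraint: "Never use em dashes"
    dash_count = draft.count(EM_DASH)
    if dash_count:
        violations.append(f"em dash found ({dash_count} occurrence(s))")

    # Constraint: "Never exceed 1,500 words" (words approximated by whitespace splitting)
    word_count = len(draft.split())
    if word_count > max_words:
        violations.append(f"word count {word_count} exceeds limit {max_words}")

    return violations


if __name__ == "__main__":
    sample = "Skills make context explicit \u2014 and repeatable."
    for problem in check_constraints(sample):
        print(problem)
```

A check like this does not replace the constraint in the skill file. It gives you a quick way to confirm whether the agent actually followed it.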

Section 4: Examples

The optional examples section shows the agent what good output looks like. Before-and-after pairs are particularly effective because they demonstrate both the quality bar and the type of transformation expected.

Examples anchor the agent's interpretation of your instructions. Without them, the agent relies on its own judgment about what "good" means. With concrete reference points, the agent calibrates its output to match your demonstrated standard. This section is optional, but teams that include it consistently report better first-draft quality.

Start With Constraints

If you are writing your first SKILL.md, begin with the constraints section. List three specific failure modes you have seen in AI output for this task. Those constraints immediately improve quality, and you can expand the instructions and context sections as you iterate.

Common Skill Categories

Content and SEO skills

Content skills handle the full writing pipeline: blog post drafting, meta tag generation, content brief creation, and internal link mapping. These skills encode a publication's editorial standards so that every piece of content follows the same structure, tone, and SEO requirements regardless of which agent session or team member produces it.

Code and development skills

Development skills cover code review, documentation generation, test writing, and refactoring patterns. A code review skill might specify which patterns to flag (N+1 queries, missing error handling, hardcoded secrets), what severity levels to use, and how to format the review output. These skills bring consistency to tasks that otherwise depend heavily on individual reviewer judgment.
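As a rough illustration of what "severity levels" and "review output format" might mean in practice, the sketch below models a review finding and formats a list of findings with the most severe first. Everything here is hypothetical: the `Severity` levels, the `Finding` fields, the file locations, and the output layout are assumptions for illustration, not a convention taken from any existing skill.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical three-level severity scale."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Finding:
    pattern: str      # e.g. "N+1 query", "missing error handling", "hardcoded secret"
    severity: Severity
    location: str     # file and line, in whatever form the reviewer reports it
    note: str


def format_review(findings: list[Finding]) -> str:
    """Format findings one per line, highest severity first, then by location."""
    if not findings:
        return "No findings."
    ordered = sorted(findings, key=lambda f: (-f.severity, f.location))
    return "\n".join(
        f"[{f.severity.name}] {f.pattern} at {f.location}: {f.note}" for f in ordered
    )


example_findings = [
    Finding("missing error handling", Severity.MEDIUM, "api/client.py:40",
            "request failure is not caught"),
    Finding("hardcoded secret", Severity.HIGH, "config.py:12",
            "move the key to an environment variable"),
]

print(format_review(example_findings))
```

Spelling out the output shape this precisely in the skill's instructions is what makes reviews comparable from one session to the next.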

Operations and analysis skills

Operations skills handle data analysis, report generation, process documentation, and audit frameworks. An automation audit skill, for example, defines the specific checks to run, the scoring criteria, and the output format for findings. These skills are particularly valuable because operations tasks tend to follow rigid, repeatable structures that are straightforward to encode. For a practical example, see "What a Good Automation Audit Should Actually Include."

Outreach and sales skills

Sales skills manage cold email personalization, prospect research, and call preparation. A cold outreach skill defines the research steps to take before writing, the personalization signals to look for, and the email structure that converts. For the full outreach automation stack, see "The Cold Outreach Automation Stack."

The Skills Directory

Community-built skills are emerging across multiple platforms. GitHub repositories contain hundreds of open-source SKILL.md files covering common development, content, and operations tasks. Claude Code plugins ship with curated skills for specific workflows. Other agent frameworks including GitHub Copilot and Cursor are building their own skill ecosystems with similar configuration patterns.

Skills are composable. An agent can load multiple SKILL.md files for a complex task, combining domain expertise from different skills simultaneously. A content production workflow might load an SEO skill, a brand voice skill, and a fact-checking skill at the same time. The agent synthesizes the instructions from all three into a unified workflow that satisfies every constraint, provided the skills do not contradict one another.
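How an agent actually loads several skills depends on the framework, so treat the following only as a sketch of the idea. It concatenates the text of several skills into one labeled block and gathers the bullet points found under a `## Constraints` heading. The function names, the comment-style labels, and the assumption that every skill uses that heading are all hypothetical.

```python
def combine_skills(skills: dict[str, str]) -> str:
    """Join several skill texts into one context block, labeling where each begins and ends."""
    sections = []
    for name, body in skills.items():
        sections.append(
            f"<!-- begin skill: {name} -->\n{body.strip()}\n<!-- end skill: {name} -->"
        )
    return "\n\n".join(sections)


def collect_constraints(skills: dict[str, str]) -> list[str]:
    """Gather unique bullet lines under a '## Constraints' heading (assumed layout)."""
    found = []
    for body in skills.values():
        in_constraints = False
        for line in body.splitlines():
            stripped = line.strip()
            if stripped.startswith("## "):
                # Entering a new section; track whether it is the constraints section.
                in_constraints = stripped.lower() == "## constraints"
            elif in_constraints and stripped.startswith("- "):
                rule = stripped[2:].strip()
                if rule not in found:
                    found.append(rule)
    return found


loaded = {
    "seo": "## Context\nSEO writer.\n\n## Constraints\n- Never exceed 1,500 words\n",
    "brand-voice": "## Constraints\n- No em dashes\n- No definition by negation\n",
    "fact-checking": (
        "## Instructions\n- Verify each claim\n\n"
        "## Constraints\n- Never make claims without a cited source\n- No em dashes\n"
    ),
}

print(combine_skills(loaded))
print(collect_constraints(loaded))
```

Listing the merged constraints side by side is also a cheap way to spot rules from different skills that pull against each other before the agent has to reconcile them.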

The Automation Switch Skills Hub is a curated directory of production-tested skills for automation builders. Each skill includes the full SKILL.md source, usage documentation, and performance notes from real-world testing. The directory covers content creation, code review, data analysis, SEO optimization, and workflow automation.

Writing Your First Skill

1. Start with the task, not the tool

   Define the exact job the agent needs to do repeatedly. A good skill solves one recurring workflow cleanly instead of trying to be a general-purpose brain dump.

2. Write explicit instructions and constraints

   Tell the agent what success looks like, what to avoid, and what format to return. Clear boundaries are what make a skill reusable across runs and across teams.

3. Add concrete examples

   Show the input pattern and the expected output pattern. Examples reduce ambiguity far more effectively than extra prose.

4. Test, tighten, then publish to the directory

   Run the skill on real tasks, refine weak spots, then add it to the Skills Directory so the workflow is discoverable and repeatable for the rest of the team.

Start with a task you perform at least three times per week. The repetition gives you enough iterations to refine the skill quickly, and the time savings compound immediately.

Write the instructions as if training a very capable new hire who has broad knowledge but zero context about your specific workflow, standards, and preferences. Be explicit about the things you would normally explain verbally during onboarding: the quality bar, the common mistakes, the non-obvious requirements that only become apparent after someone gets it wrong.

Test the skill with a real task. Compare the output to your manual work. Note where the agent deviated from your expectations and add constraints or clarifications to address each gap. Most skills require three to five iterations before they produce consistently good output. That investment pays back on every subsequent use.
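One lightweight way to see where the agent deviated is a line-by-line diff between your manual version and the agent's draft. The sketch below uses Python's standard `difflib` module. The function name `deviation_report` and the sample texts are assumptions for illustration. A textual diff only surfaces structural and wording differences, so judging quality still takes a human read.

```python
import difflib


def deviation_report(manual: str, agent: str) -> list[str]:
    """Return unified-diff lines showing where the agent draft departs from the manual version."""
    return list(
        difflib.unified_diff(
            manual.splitlines(),
            agent.splitlines(),
            fromfile="manual",
            tofile="agent",
            lineterm="",
        )
    )


manual_version = "Title\nIntro paragraph\nConclusion"
agent_version = "Title\nIntro paragraph\nSummary"

for line in deviation_report(manual_version, agent_version):
    print(line)
```

Each removed or added line in the report is a candidate for a new constraint or a clarified instruction in the next iteration of the skill.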

Once the skill works reliably, share it. The skills ecosystem grows when builders contribute their tested configurations back to the community. A skill that saves you thirty minutes per task will save the same time for everyone who uses it.

For a broader view of the automation tools and platforms these skills plug into, see "Best Automation Tools for Small Businesses in 2026." For a comparison of two platforms where these skills can drive automated workflows, see "Airtable vs Notion as an Automation Platform."